
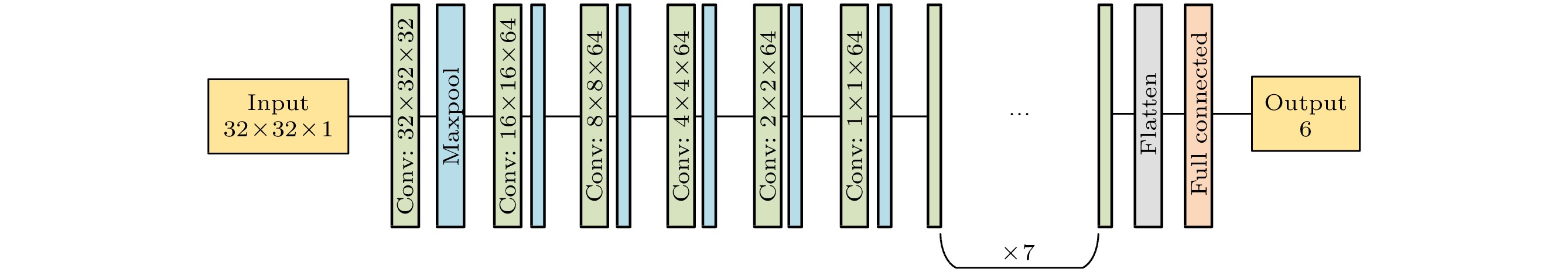

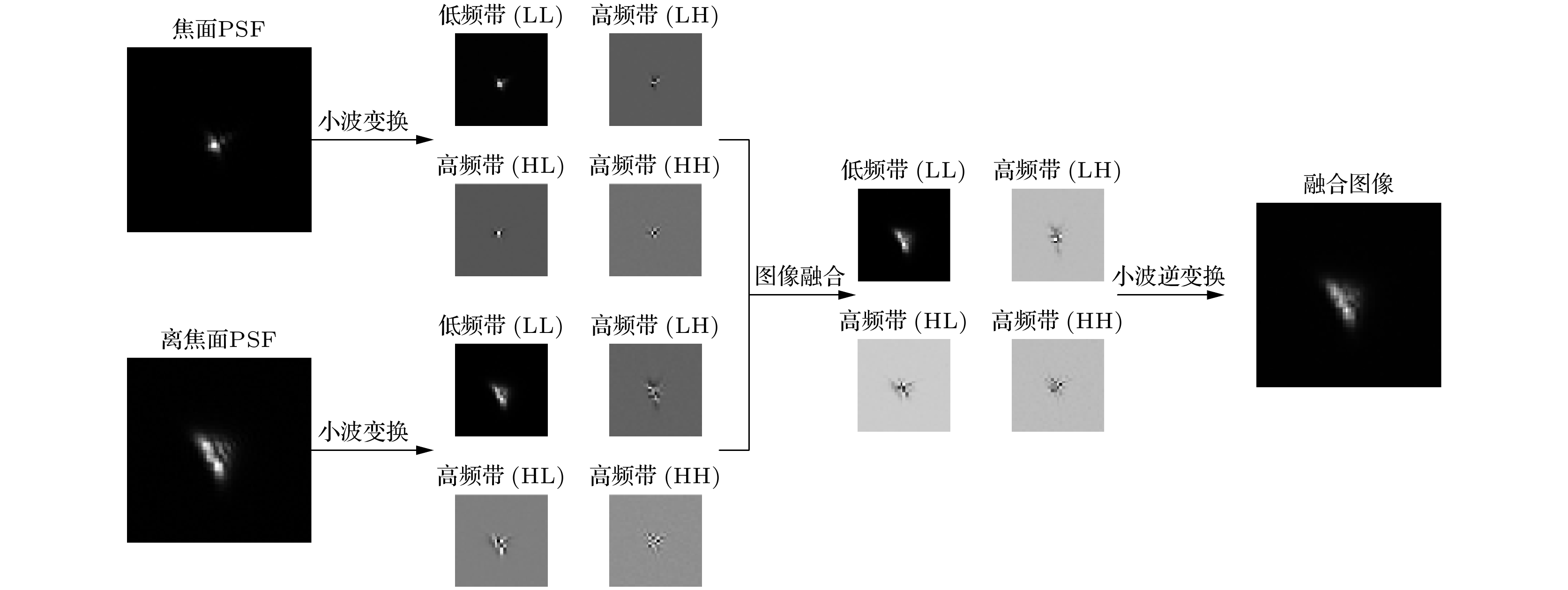

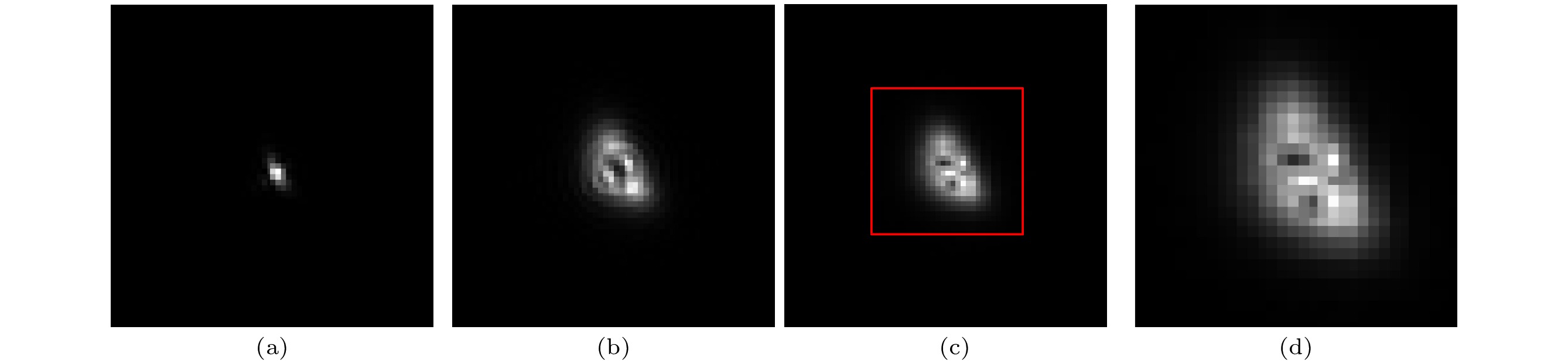

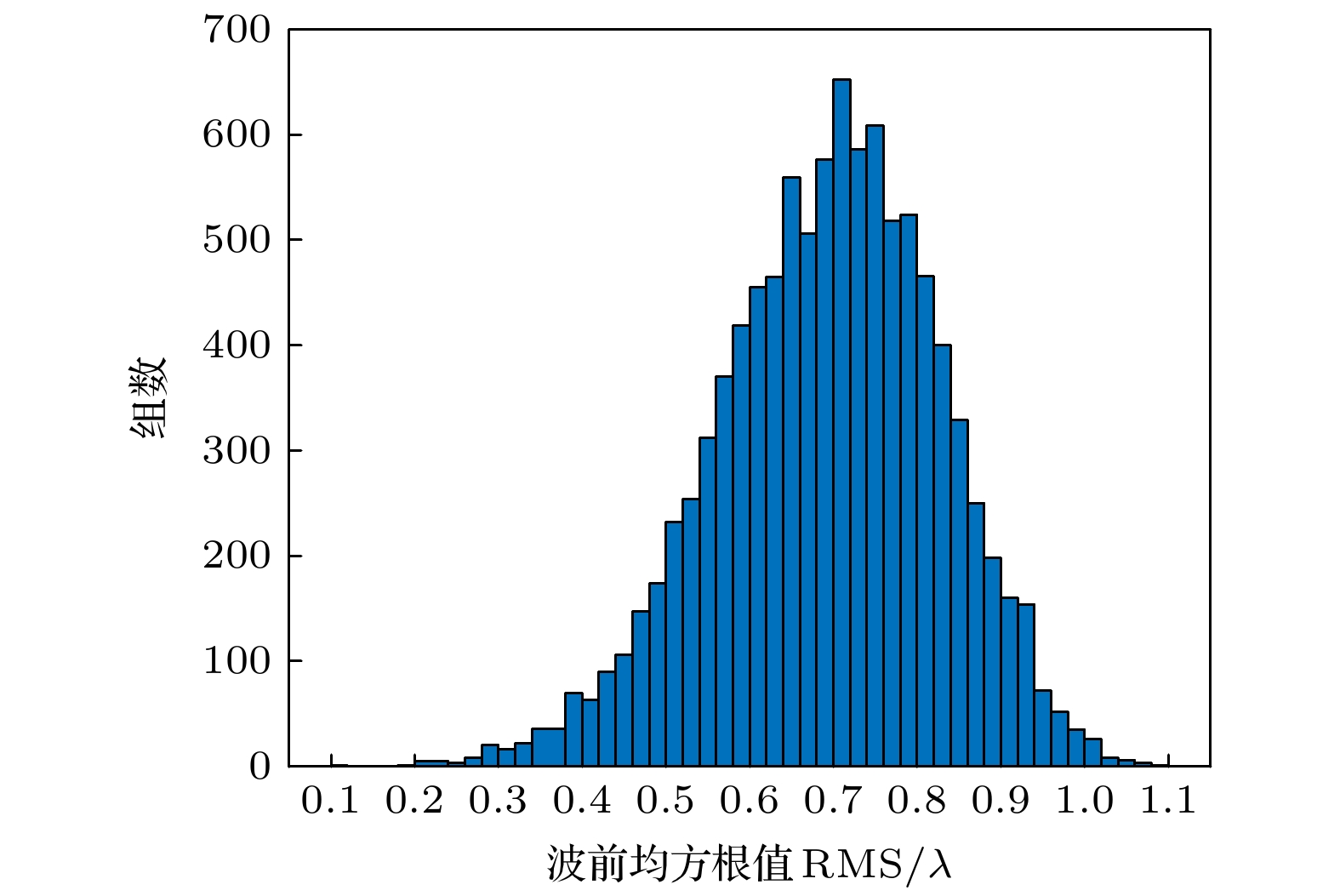

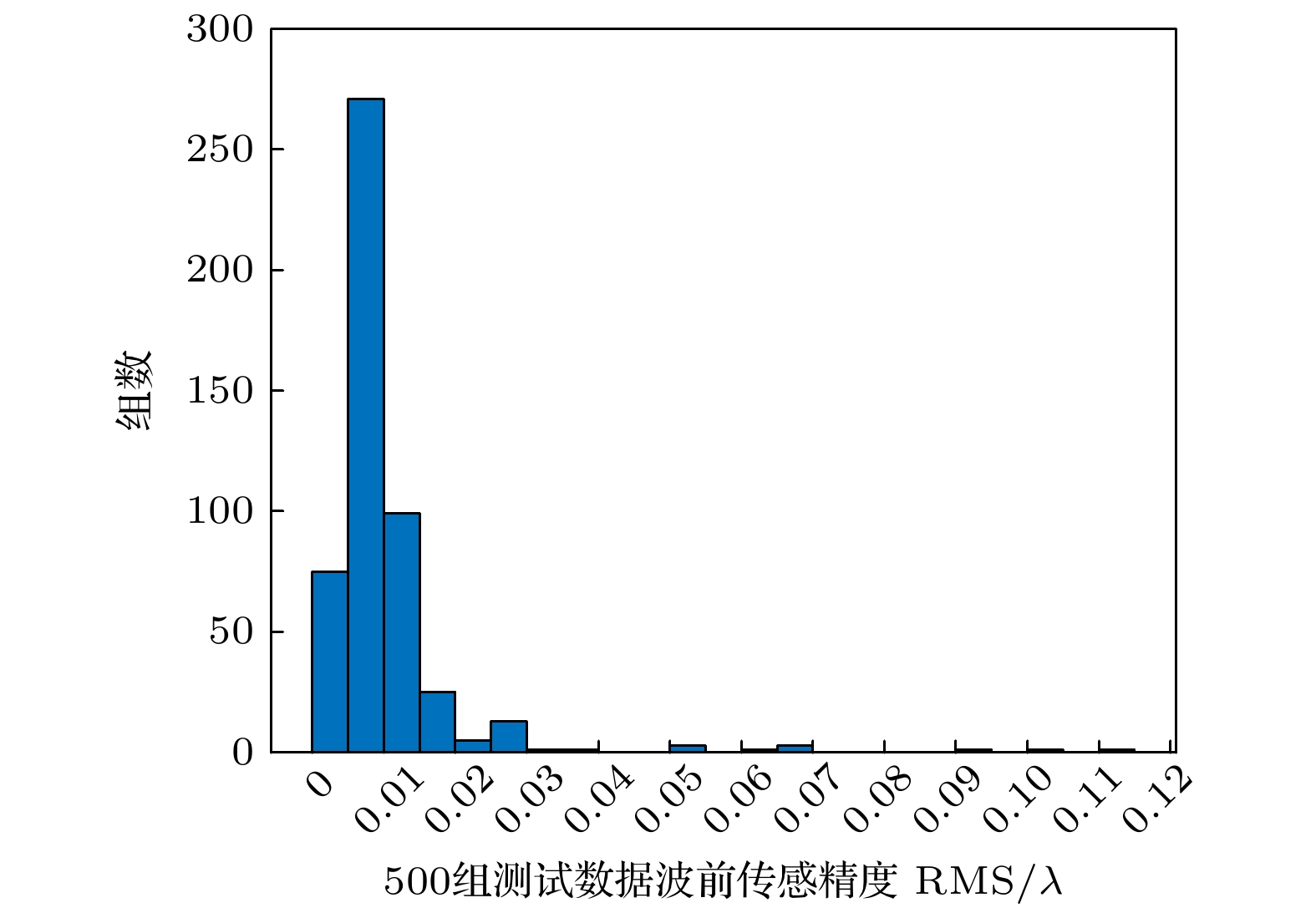

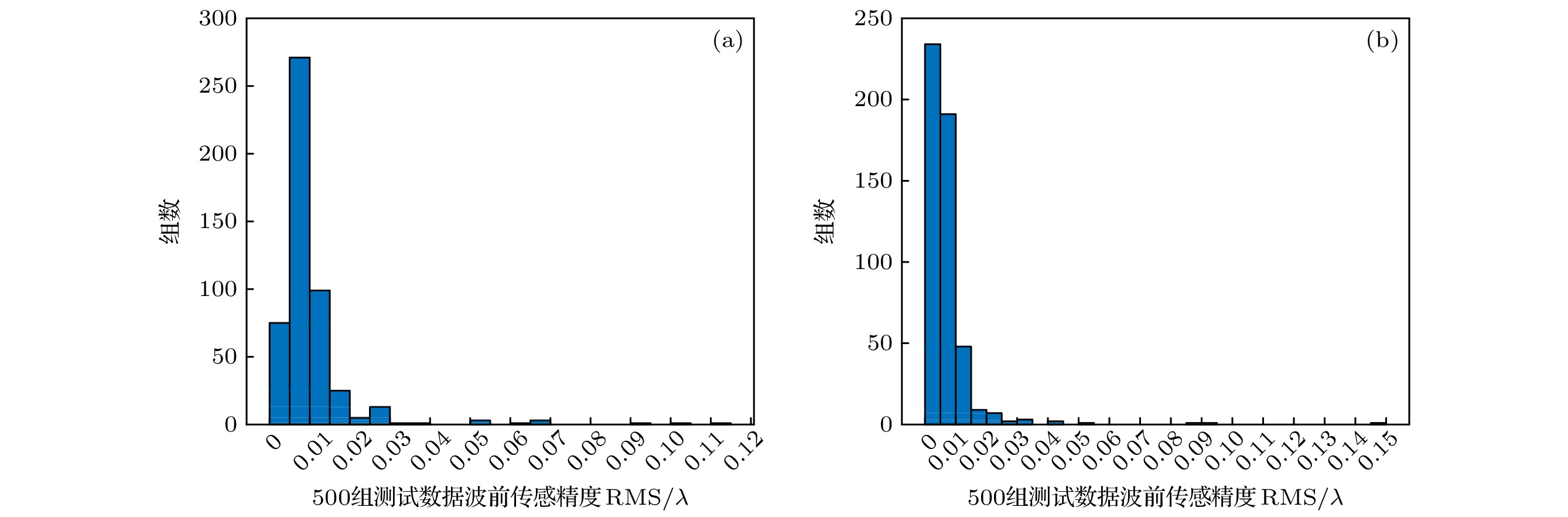

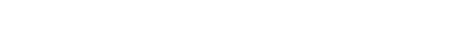

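Phase retrieval senses the wavefront of a system from the intensity distribution on one or more cross-sections along the path of light propagation. Its structure is simple and it is insensitive to vibration and environmental disturbance, so it is widely used in fields such as optical remote sensing and aberration measurement. Conventional phase retrieval relies on iterative computation, which struggles to meet real-time requirements and depends to some extent on the initial value of the iterative transform or iterative optimization. To overcome these problems, this paper proposes a phase retrieval method based on a convolutional neural network. The method uses wavelet-transform image fusion to fuse the in-focus and defocused images, which simplifies the network input without losing image information. Once trained, the network directly outputs, from the input fused image, the Zernike coefficients of orders 4–9 that characterize the wavefront phase, with a wavefront sensing accuracy of 0.015λ root-mean-square (RMS), where λ = 632.8 nm. The effects of noise, defocus error, and image sampling resolution on the sensing accuracy are studied. The method shows a degree of robustness to noise; for relative defocus errors within 7.5%, the RMS sensing accuracy still reaches 0.05λ; and the accuracy improves as the sampling resolution increases, at the cost of longer training time. In addition, the sensing accuracy achievable when the aberration orders of the actual system differ slightly from those used in training is analyzed, and a solution is given.

Conventional phase retrieval wavefront sensing approaches are mainly iterative algorithms, such as the Gerchberg-Saxton (G-S) algorithm, the Yang-Gu (Y-G) algorithm, and error-reduction algorithms. These methods compute the wavefront phase from intensity information. However, most traditional phase retrieval algorithms struggle to meet real-time requirements and depend to some extent on the initial value used in the iterative transform or iterative optimization, which limits their practicality. To solve these problems, this paper proposes a phase-diversity phase retrieval wavefront sensing method based on wavelet-transform image fusion and a convolutional neural network (CNN). Specifically, wavelet-transform image fusion is used to fuse the point spread functions at the in-focus and defocused image planes, which simplifies the network input without losing image information. A CNN can extract image features directly and fit the required nonlinear mapping. Here the CNN establishes the nonlinear mapping between the fused images and the wavefront distortions (represented by Zernike polynomials): the fused images are the input data and the corresponding Zernike coefficients are the output data.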
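The fusion step described above can be illustrated with a minimal sketch. The abstract does not state which wavelet or fusion rule is used, so everything specific here is an assumption: a single-level Haar transform, averaging of the approximation band, and a maximum-absolute-value rule for the detail bands. The function names `haar_dwt2`, `haar_idwt2`, and `fuse_images` are hypothetical and written in plain Python so the example has no dependencies.

```python
def haar_dwt2(img):
    """Single-level 2D Haar transform of an image given as a list of rows.

    Both side lengths must be even. Returns (approx, det_h, det_v, det_d),
    each half the size of the input in both directions.
    """
    approx, det_h, det_v, det_d = [], [], [], []
    for r in range(0, len(img), 2):
        rows = ([], [], [], [])
        for c in range(0, len(img[0]), 2):
            a, b = img[r][c], img[r][c + 1]
            d, e = img[r + 1][c], img[r + 1][c + 1]
            rows[0].append((a + b + d + e) / 2.0)  # scaled local sum (approximation)
            rows[1].append((a - b + d - e) / 2.0)  # difference between columns
            rows[2].append((a + b - d - e) / 2.0)  # difference between rows
            rows[3].append((a - b - d + e) / 2.0)  # diagonal difference
        for band, row in zip((approx, det_h, det_v, det_d), rows):
            band.append(row)
    return approx, det_h, det_v, det_d


def haar_idwt2(approx, det_h, det_v, det_d):
    """Exact inverse of haar_dwt2."""
    img = []
    for r in range(len(approx)):
        top, bottom = [], []
        for c in range(len(approx[0])):
            s, h = approx[r][c], det_h[r][c]
            v, d = det_v[r][c], det_d[r][c]
            top += [(s + h + v + d) / 2.0, (s - h + v - d) / 2.0]
            bottom += [(s + h - v - d) / 2.0, (s - h - v + d) / 2.0]
        img += [top, bottom]
    return img


def fuse_images(img_a, img_b):
    """Fuse two equally sized images in the Haar domain (assumed fusion rule)."""
    bands_a, bands_b = haar_dwt2(img_a), haar_dwt2(img_b)
    # Approximation band: average the two images.
    fused = [[[(x + y) / 2.0 for x, y in zip(row_a, row_b)]
              for row_a, row_b in zip(bands_a[0], bands_b[0])]]
    # Detail bands: keep the coefficient with the larger magnitude.
    for band_a, band_b in zip(bands_a[1:], bands_b[1:]):
        fused.append([[x if abs(x) >= abs(y) else y for x, y in zip(row_a, row_b)]
                      for row_a, row_b in zip(band_a, band_b)])
    return haar_idwt2(*fused)


# Toy 4 x 4 stand-ins for the in-focus and defocused point spread functions.
in_focus = [[0, 0, 0, 0], [0, 8, 8, 0], [0, 8, 8, 0], [0, 0, 0, 0]]
defocused = [[1, 1, 1, 1], [1, 2, 2, 1], [1, 2, 2, 1], [1, 1, 1, 1]]
fused = fuse_images(in_focus, defocused)
```

The fused image has the same size as each input, so the network receives one image instead of two. A practical implementation would more likely use a wavelet library and several decomposition levels.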
The network trained in this paper has 22 layers: 1 input layer, 13 convolution layers, 6 pooling layers, 1 flatten layer, and 1 fully connected layer, which serves as the output layer. The convolution kernels are 3 × 3 with a stride of 1. Max pooling is used with a 2 × 2 pooling kernel. The activation function is ReLU, the optimizer is Adam, the loss function is the mean squared error (MSE), and the learning rate is 0.0001. The data set contains 10000 samples, divided into a training set, a validation set, and a test set that account for 80%, 15%, and 5% of the data, respectively. With the fused images as input, the trained CNN directly outputs the Zernike coefficients of orders 4–9 with high precision, which is better suited to real-time requirements. Extensive simulations show that the wavefront sensing accuracy is 0.015λ RMS when the wavefront consists of low-spatial-frequency aberrations within 1.1λ RMS (i.e., the Zernike coefficients of orders 4–9 lie in $[-0.5\lambda,\, 0.5\lambda]$). In practical applications, the number of units in the network output layer can be changed and the network structure adjusted according to the aberration characteristics of the system, following the method presented in this paper, so that a new network suited to higher-order aberrations can be trained for high-precision wavefront sensing. The proposed method is also shown to have a degree of robustness to noise, and the wavefront sensing accuracy remains acceptable when the relative defocus error is within 7.5%. The wavefront sensing accuracy improves as the image resolution increases, but the amount of network input data grows with the sampling rate, and the time cost of network training increases accordingly.
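As a quick consistency check on the architecture and data split quoted above, the sketch below tallies the layers, traces the feature-map side length through the network, and computes the split sizes. The abstract does not give the input size, the padding, or how the 13 convolution layers are grouped between the 6 pooling layers, so the 128-pixel input, the padding of 1, and the grouping in `blocks` are all assumptions chosen only for illustration.

```python
def conv_out(n, kernel=3, stride=1, padding=1):
    """Side length after a convolution; padding=1 is an assumption."""
    return (n + 2 * padding - kernel) // stride + 1


def pool_out(n, size=2):
    """Side length after non-overlapping max pooling."""
    return n // size


def trace_size(n, convs_per_block):
    """Apply each block of convolutions followed by one pooling layer."""
    for n_convs in convs_per_block:
        for _ in range(n_convs):
            n = conv_out(n)
        n = pool_out(n)
    return n


def split_counts(total, percents):
    """Number of samples in each subset for integer percentages."""
    return [total * p // 100 for p in percents]


# Hypothetical grouping: 13 convolution layers spread over 6 pooling stages.
blocks = [2, 2, 2, 2, 2, 3]
n_layers = 1 + sum(blocks) + len(blocks) + 1 + 1  # input, conv, pool, flatten, output
final_side = trace_size(128, blocks)               # 128 is an assumed input size
train, val, test = split_counts(10000, (80, 15, 5))
n_outputs = len(range(4, 10))                       # Zernike orders 4 to 9
```

Under these assumptions the tally reproduces the 22 layers stated in the text, and the six pooling stages shrink each side of the feature map by a factor of 64 before the flatten layer.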

Keywords:

- phase retrieval
- convolutional neural network
- image fusion
- sensing accuracy


Table 1. Simulation system parameters.

| Lens focal length (mm) | Entrance pupil diameter (mm) | Wavelength (nm) | Defocus distance (mm) |
| --- | --- | --- | --- |
| 150 | 10 | 632.8 | 4 |
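To give a feel for the scale of the phase diversity implied by these parameters, the sketch below converts the defocus distance into a peak-to-valley wavefront defocus at the pupil edge. It uses the standard paraxial relation W = Δz / (8 N²), where N is the f-number; this relation is not quoted in the source and is only an approximation for defocus distances much smaller than the focal length. The function name `defocus_pv_waves` is hypothetical.

```python
def defocus_pv_waves(focal_mm, pupil_mm, wavelength_nm, defocus_mm):
    """Peak-to-valley defocus in waves for a longitudinal image-plane shift.

    Uses the paraxial approximation W = dz / (8 * N**2), with N = f / D.
    """
    f_number = focal_mm / pupil_mm
    pv_mm = defocus_mm / (8.0 * f_number ** 2)
    return pv_mm * 1e6 / wavelength_nm  # convert mm to nm, then to waves


# Values from Table 1.
pv_nominal = defocus_pv_waves(150.0, 10.0, 632.8, 4.0)

# A 7.5% relative defocus error corresponds to a 0.3 mm shift of the defocused plane.
pv_error = defocus_pv_waves(150.0, 10.0, 632.8, 4.0 * 0.075)
```

With the Table 1 values the nominal diversity is roughly 3.5 waves peak-to-valley, so a relative defocus error of a few percent changes the diversity phase by a fraction of a wave.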

Table 2. Wavefront sensing accuracy of the proposed method when the system contains aberrations of different orders.

| Aberration orders | 4–7 | 4–8 | 4–10 | 4–11 |
| --- | --- | --- | --- | --- |
| Sensing accuracy RMS ($\lambda$) | 0.010 | 0.012 | 0.015 | 0.100 |

Table 3. Sensing accuracy of the proposed method under different defocus errors.

| Relative defocus error (%) | 2.5 | 5.0 | 7.5 | 10.0 |
| --- | --- | --- | --- | --- |
| Sensing accuracy RMS ($\lambda$) | 0.022 | 0.035 | 0.050 | 0.065 |

Table 4. Influence of noise on the sensing accuracy.

| Signal-to-noise ratio (dB) | 50 | 40 | 35 | 30 | 25 |
| --- | --- | --- | --- | --- | --- |
| Sensing accuracy RMS ($\lambda$) | 0.015 | 0.015 | 0.015 | 0.020 | 0.060 |
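A noise test of this kind can be set up by corrupting the simulated images at a chosen signal-to-noise ratio. The sketch below is one way to do so, under assumptions the source does not confirm: the noise is additive, zero-mean, and Gaussian, and the ratio is defined as 10 log10 of mean signal power over mean noise power. The function names are hypothetical, and the image is treated as a flat list of pixel values.

```python
import math
import random


def signal_power(pixels):
    """Mean squared pixel value."""
    return sum(p * p for p in pixels) / len(pixels)


def noise_sigma(pixels, snr_db):
    """Noise standard deviation that yields the requested SNR in dB."""
    return math.sqrt(signal_power(pixels) / 10 ** (snr_db / 10.0))


def add_gaussian_noise(pixels, snr_db, rng):
    """Return a noisy copy of the pixel list (assumed additive Gaussian model)."""
    sigma = noise_sigma(pixels, snr_db)
    return [p + rng.gauss(0.0, sigma) for p in pixels]


def measured_snr_db(clean, noisy):
    """SNR actually realised by one noisy sample."""
    noise_power = sum((n - c) ** 2 for c, n in zip(clean, noisy)) / len(clean)
    return 10.0 * math.log10(signal_power(clean) / noise_power)


rng = random.Random(0)  # fixed seed so the experiment is repeatable
clean = [1.0 + 0.5 * math.sin(i / 10.0) for i in range(10000)]  # synthetic stand-in signal
noisy = add_gaussian_noise(clean, 30.0, rng)
```

Sweeping `snr_db` over the values in Table 4 and feeding the noisy images to a trained network would reproduce the structure of the experiment, though not necessarily its numbers, since the noise model used in the paper may differ.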
